AI visual inspection vendors compared: how to pick the right one for your shop floor

According to BCG's 2025 AI adoption report, 74% of manufacturers that pilot AI visual inspection never move past the first production line. The technology works. The vendor choice is usually what breaks.

This guide walks through the four categories of AI visual inspection vendors on the market today, the criteria that actually predict rollout success, and the questions a buyer should ask on every demo call. It draws on four years of deployments at Enao Vision across injection molding, ceramics, PVC extrusion and manual assembly, plus head-to-head evaluations we have won and lost against vendors in every major category.

The four categories of AI visual inspection vendors

Most of the "50 vendor" comparison posts on the internet lump very different products into one list. That is not useful when you are trying to pick one. The real market segments into four categories, each with a different buyer, a different pricing model and a different failure mode.

Enterprise machine vision platforms are the Cognex, Keyence and Omron archetypes. They bundle proprietary cameras, lighting controllers and rule-based plus deep-learning software into a complete stack. They work well when you have a single high-volume line, a predictable environment and the CapEx budget to match. They struggle when you have 20 different SKUs on the same line or when engineering changes happen every quarter.

Industrial camera plus software stacks combine a camera vendor like Basler or Framos with a software library like MVTec Halcon or Cognex VisionPro. This is the traditional integrator path. It gives you maximum flexibility but requires a system integrator or an internal vision engineer to assemble the pieces. Typical time to first inspection sits between three and nine months, and maintenance falls back on whoever assembled the stack.

AI-native cloud platforms emerged from the deep-learning wave. Landing AI, Clarifai and a handful of others offer browser-based labeling, cloud training and model deployment to industrial PCs. They reduce the ML barrier but usually still require fixed cameras, fixed lighting and manual handoff to shop floor operators.

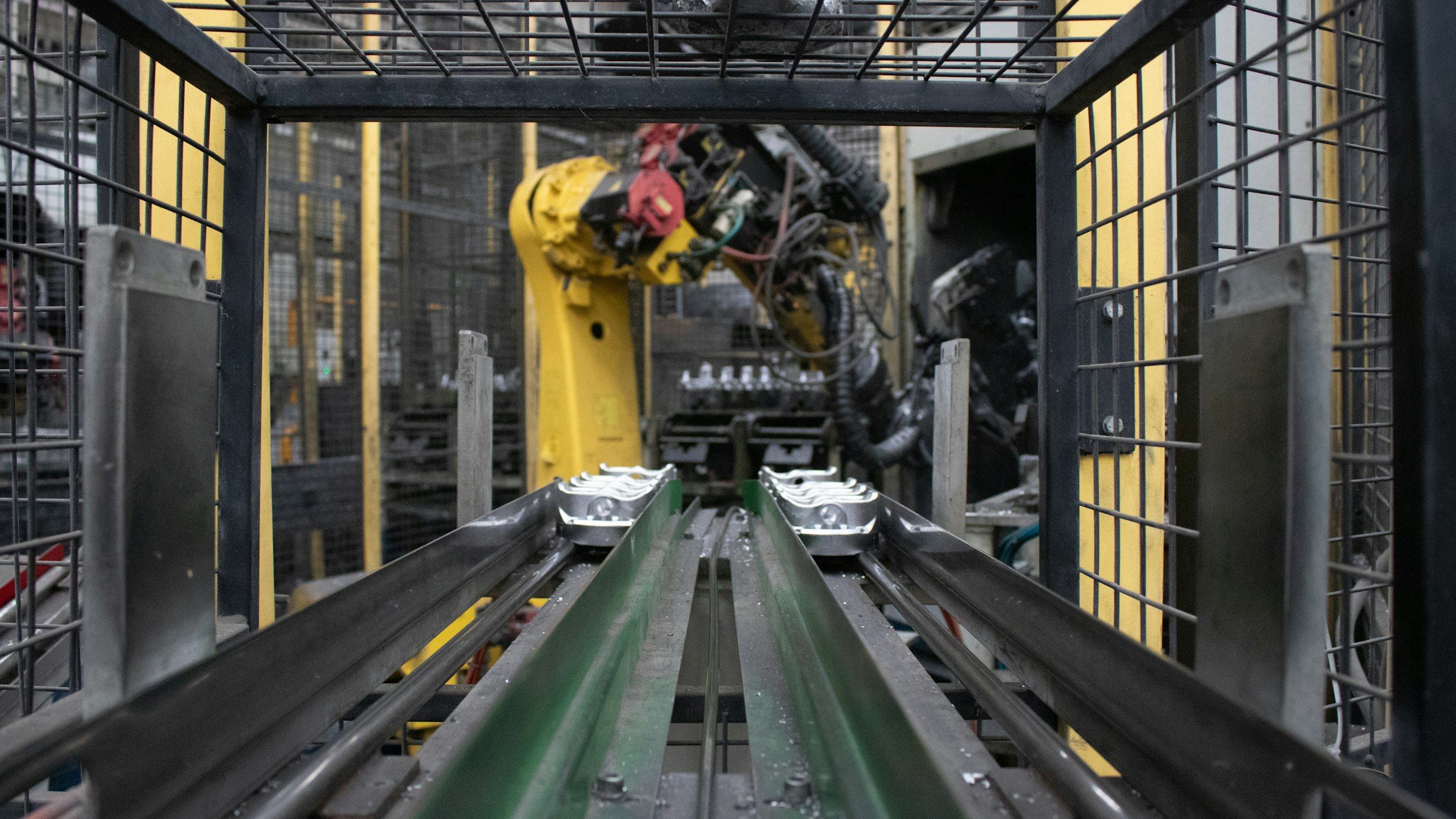

Mobile-first platforms are the newest category. Enao Vision is the most complete example. The inspection happens on an iPhone or iPad mounted to the line, the model trains on 5 to 20 images per defect class, and the operator doubles as the data labeler. No dedicated camera, no lighting rig, no server.

If you want a deeper look at the underlying architectures, our machine vision systems guide compares smart cameras, PC-based systems, embedded vision and fleet-based mobile inspection head to head.

The criteria that actually predict rollout success

Marketing pages focus on accuracy numbers. Accuracy is the easy part. The hard part is everything that has to go right before and after the model runs. Four criteria matter more than any benchmark.

Time to first deploy. How long between signing the contract and the first real inspection running on a line? Enterprise platforms land between 12 and 26 weeks. Integrator stacks land between 16 and 36 weeks. AI-native cloud platforms land between 6 and 16 weeks. Mobile-first platforms land in 1 to 3 weeks. Every additional week is a week of parts shipped without the guardrail.

Data requirements per defect class. Ask how many images the model needs per class to reach the vendor's claimed accuracy. Enterprise platforms and integrator stacks routinely quote 500 to 5,000 images per class, which kills any project with rare defects. AI-native platforms often quote 100 to 500. Mobile-first platforms built on few-shot and foundation-model backbones now work with 5 to 20 images per class. That single number decides whether your rollout takes weeks or quarters.
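To see why the per-class image count dominates the schedule, a rough back-of-the-envelope sketch can help. The image ranges are the ones quoted above; the defect frequency (`occurrences_per_week`) and the function names are illustrative assumptions, not measurements from any vendor.

```python
import math

# Images per defect class as quoted in the paragraph above: (low, high).
IMAGES_PER_CLASS = {
    "enterprise_or_integrator": (500, 5000),
    "ai_native_cloud": (100, 500),
    "mobile_first": (5, 20),
}


def total_images(defect_classes):
    """Total images to collect across all defect classes, per vendor category."""
    return {
        category: (low * defect_classes, high * defect_classes)
        for category, (low, high) in IMAGES_PER_CLASS.items()
    }


def weeks_to_collect(images_per_class, occurrences_per_week):
    """Weeks of production needed to photograph one defect class often enough.

    occurrences_per_week is a hypothetical input: how often the defect
    actually shows up on the line and gets captured.
    """
    return math.ceil(images_per_class / occurrences_per_week)


if __name__ == "__main__":
    # Hypothetical rare defect that appears about 10 times per week.
    for category, (low, high) in IMAGES_PER_CLASS.items():
        print(category, weeks_to_collect(low, 10), "to", weeks_to_collect(high, 10), "weeks")
```

For a defect that appears roughly 10 times a week, even the low end of a 500-image quota means about 50 weeks of collection, while a 5-image requirement is met within the first week.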

Hardware independence. If the vendor locks you into their cameras, their lights and their enclosures, your second line costs almost as much as your first. Hardware-independent vendors let you use commodity components and redeploy when line layout changes.

Change management reality. Who has to learn what, and how many of them? A platform that requires a vision engineer and a data scientist is fine if you have them. Most small and mid-sized manufacturers do not, which is why we wrote our guide on closing the quality control gap for manual assembly. Having operators act as labelers is the difference between a pilot that ships and a pilot that sits on a shelf.

Questions to ask on every demo

The five questions below flush out 90% of what vendor marketing pages hide.

Can you run the demo on my actual defect samples? If the answer is "send us 500 images and we will come back in four weeks", the vendor is not ready for your line. If the answer is "send us 10 images and we will show you tomorrow", the vendor is.

What happens when my line configuration changes? Every manufacturing line changes. New SKUs, new fixtures, new lighting conditions. Ask for the actual retraining workflow and the retraining downtime in hours.

Who owns the model weights? Some vendors treat the trained model as their IP. That matters when your quality head leaves and you want to switch platforms. Read the contract before the demo, not after.

What does pricing look like at two lines, five lines and 20 lines? CapEx pricing breaks at scale in one direction; subscription pricing breaks in the other. Both can work, but the break-even points are very different. Our CapEx to OpEx shift guide walks through the procurement model tradeoffs.
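A simple way to pressure-test a quote is to compute cumulative cost under both models at each line count. The sketch below assumes a deliberately linear model, and every price in it is a made-up placeholder rather than a real vendor figure; the function and parameter names are likewise hypothetical.

```python
import math


def capex_cost(lines, months, hardware_per_line, maintenance_per_line_month):
    """Upfront hardware per line plus a flat monthly maintenance cost per line."""
    return lines * (hardware_per_line + maintenance_per_line_month * months)


def subscription_cost(lines, months, fee_per_line_month):
    """Flat monthly subscription fee per line, no upfront cost."""
    return lines * fee_per_line_month * months


def break_even_month(hardware_per_line, maintenance_per_line_month, fee_per_line_month):
    """First month at which cumulative subscription spend catches up with CapEx spend."""
    monthly_gap = fee_per_line_month - maintenance_per_line_month
    if monthly_gap <= 0:
        return None  # the subscription never becomes the more expensive option
    return math.ceil(hardware_per_line / monthly_gap)


if __name__ == "__main__":
    # Placeholder prices for illustration only.
    HARDWARE, MAINTENANCE, FEE = 60_000, 500, 1_500
    for lines in (2, 5, 20):
        print(lines, capex_cost(lines, 36, HARDWARE, MAINTENANCE),
              subscription_cost(lines, 36, FEE))
    print("break-even month:", break_even_month(HARDWARE, MAINTENANCE, FEE))
```

In this purely linear model the break-even month is the same at any line count. Real quotes usually differ because of volume discounts, platform fees or integration charges, so add those as extra terms and ask the vendor for the numbers at two, five and 20 lines.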

How do you handle lighting variation? A demo on a shielded optical table proves nothing. Ask how the model behaves with a 30% lux swing. Our lighting guide explains why a good AI system should not need a EUR 500 light.
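Before a vendor visit, you can approximate a lux swing on your own sample images and check whether predictions stay stable. The sketch below is a rough proxy under stated assumptions: it scales 8-bit grayscale pixel values linearly, which ignores camera auto-exposure and gamma, and `predict` is a hypothetical callable standing in for whatever model the vendor exposes. The toy threshold model exists only to make the example runnable.

```python
def scale_brightness(pixels, factor):
    """Scale 8-bit grayscale pixel values by factor, clipped to 0..255."""
    return [[min(255, max(0, round(value * factor))) for value in row] for row in pixels]


def lighting_stability(pixels, predict, swing=0.30):
    """Compare predictions on brightness-shifted copies against the original.

    predict is a hypothetical callable: it takes a 2D list of pixel values
    and returns a label. Returns (factor, label, matches_baseline) tuples.
    """
    baseline = predict(pixels)
    factors = [1 - swing, 1 - swing / 2, 1 + swing / 2, 1 + swing]
    report = []
    for factor in factors:
        label = predict(scale_brightness(pixels, factor))
        report.append((factor, label, label == baseline))
    return report


def naive_threshold_model(pixels):
    """Toy stand-in model: flags a defect if any pixel is darker than 60."""
    return "defect" if any(v < 60 for row in pixels for v in row) else "ok"


if __name__ == "__main__":
    image = [[200, 200], [200, 50]]  # tiny synthetic part with one dark spot
    for factor, label, stable in lighting_stability(image, naive_threshold_model):
        print(f"x{factor:.2f}: {label} ({'stable' if stable else 'FLIPPED'})")
```

The fixed-threshold toy model misses the dark spot once the image is brightened by 30%, which is the kind of failure this question is meant to surface. A simulated swing is no substitute for changing the real lighting on the line, but it is a cheap first filter.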

Red flags that kill rollouts

Four patterns show up on every failed deployment we have been called in to fix.

They are fixed lighting requirements that cannot be changed once hardware is installed, minimum image quotas above 1,000 per defect class for rare defects, timelines of six months or more before the first inspection runs, and proprietary camera lock-in that forces a second line to duplicate the full CapEx.

If two or more of these show up in a vendor's standard deployment package, then in our experience the chance of your pilot reaching production drops below 30%. Our setup-failure post has the full pattern library.
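The red-flag check above is easy to turn into a small screening helper for a vendor shortlist. This is a minimal sketch: the thresholds come from the text, while the `VendorPackage` fields and function names are hypothetical and would need to be mapped to whatever each vendor's proposal actually states.

```python
from dataclasses import dataclass


@dataclass
class VendorPackage:
    """Hypothetical summary of a vendor's standard deployment package."""
    lighting_fixed_after_install: bool
    min_images_per_rare_defect_class: int
    months_to_first_inspection: float
    proprietary_camera_required: bool


def red_flags(package):
    """Return the red flags from the list above that apply to this package."""
    flags = []
    if package.lighting_fixed_after_install:
        flags.append("fixed lighting")
    if package.min_images_per_rare_defect_class > 1000:
        flags.append("image quota above 1,000 per rare defect class")
    if package.months_to_first_inspection >= 6:
        flags.append("six months or more to first inspection")
    if package.proprietary_camera_required:
        flags.append("proprietary camera lock-in")
    return flags


def is_high_risk(package):
    """Two or more red flags is the threshold named in the text above."""
    return len(red_flags(package)) >= 2


if __name__ == "__main__":
    quote = VendorPackage(True, 2000, 4, False)  # made-up example proposal
    print(red_flags(quote), is_high_risk(quote))
```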

Vendor choice changes with context

There is no universal best vendor. Three contextual factors move the answer more than anything else.

Single line versus multi-plant rollout. Enterprise platforms with fixed cameras pay off on a single high-volume line running one SKU. Mobile-first platforms win on multi-line, multi-SKU rollouts where hardware cost compounds.

Greenfield versus brownfield. If you are designing a new line and can specify cameras, lighting and fixtures, the enterprise path is defensible. If you are retrofitting a line that has been running for ten years, hardware-independent platforms are usually the only option that reaches production.

In-house ML team or not. If you have data scientists and vision engineers, AI-native cloud platforms and integrator stacks give you the most control. If you do not, mobile-first platforms with operator-level labeling are the only category where the rollout does not block on hiring.

Where Enao fits

Enao Vision sits in the mobile-first category. Teams run inspection on an iPhone or iPad mounted to the line, use 5 to 20 images per defect class, and deploy the first line in 1 to 3 weeks. That trades specialized throughput for flexibility, which is the right trade for mid-sized manufacturers running 20+ SKUs across multiple lines. It is the wrong trade if you are running a single 5,000 parts-per-minute line with one product for a decade.

We are transparent about both sides. If your use case is outside our fit, we will say so on the first call. If it is inside, we will run your actual defect samples on a demo within 48 hours.

Next step

Pull three of the five demo questions above into your next vendor call. If a vendor cannot answer "can you run the demo on my samples this week?" with a yes, that tells you more than any G2 review. We publish our side of the answer openly: you can see it on the Enao Vision product page or book a demo with your own samples directly from there.

Evaluating vendors and want to compare shortlists with other teams? The vendor selection conversation happens in our community.

Get started

Want to see how Enao Vision works on your line? You can get started for free using an iPhone you already have, or join the community to compare notes with other quality and operations teams putting AI on the shop floor.