AI quality control in 2026: what it must do and what to pay

AI quality control in 2026 means edge-deployed neural networks (defect detection, anomaly detection, or hybrid models) that inspect parts on the line in under 100 milliseconds, with false-negative rates under 0.5 percent and pilot costs under 5,000 euro per line. According to Bitkom, adoption in German-speaking manufacturing has roughly doubled in two years. The 2026 vendor bar has four parts: paid 30- to 60-day pilots, OpEx licensing with monthly cancelability, iPhone-grade sensors, and labels approved by the shift lead.

The adoption curve has pulled more vendors into the market than manufacturers can absorb. The fallout: many pilots started on a demo recipe and were still frozen six months later. This post is a cheat sheet for avoiding that, whether you work in operations or in quality assurance.

What AI-powered inspection in 2026 must actually do

Three numbers define the 2026 bar that any AI-powered inspection vendor should meet: inference latency under 100 milliseconds per part, a false-negative rate under 0.5 percent, and integration cost under 5,000 euro per line for the initial pilot.

A vendor that cannot hold those thresholds is behind, for two reasons. First, modern edge models running on Core ML on an iPhone 15 Pro complete inference in 50 to 80 milliseconds out of the box. Second, SaaS-style monthly licensing has pushed the market entry price down. An AI-assisted inspection station that hits all three numbers gives you the competitive advantage you are paying for.
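You can check the thresholds directly against a pilot log. Below is a minimal Python sketch. It assumes the shift lead's confirmed labels are ground truth and that "under 100 milliseconds" is judged on the 95th-percentile latency. The `PilotResult` fields and the function names are illustrative, not any vendor's API.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import quantiles

# The three 2026 thresholds from this post.
MAX_LATENCY_MS = 100.0
MAX_FALSE_NEGATIVE_RATE = 0.005  # 0.5 percent
MAX_PILOT_COST_EUR = 5_000.0


@dataclass
class PilotResult:
    latencies_ms: list[float]    # per-part inference times logged during the pilot
    defects_total: int           # parts the shift lead confirmed as defective
    defects_missed: int          # confirmed defects the model passed (false negatives)
    integration_cost_eur: float  # one-off integration cost for this line


def p95(values: list[float]) -> float:
    """95th percentile; the 'inclusive' method stays within the observed range."""
    return quantiles(values, n=20, method="inclusive")[-1]


def check_pilot(r: PilotResult) -> dict[str, bool]:
    """Return pass/fail per threshold so the Go/No-Go call rests on numbers."""
    if r.defects_total == 0:
        raise ValueError("need confirmed defective parts to measure false negatives")
    fnr = r.defects_missed / r.defects_total
    return {
        "latency": p95(r.latencies_ms) < MAX_LATENCY_MS,
        "false_negative_rate": fnr < MAX_FALSE_NEGATIVE_RATE,
        "cost": r.integration_cost_eur < MAX_PILOT_COST_EUR,
    }
```

Here is what the sketch does with one example log: 99 parts at 50 ms and one at 120 ms, 1 missed defect out of 400, and 4,000 euro integration cost. That log passes all three checks. Using a percentile instead of the single slowest part is a judgment call; a stricter team could use `max()` instead.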

How does AI quality control fit into automation workflows?

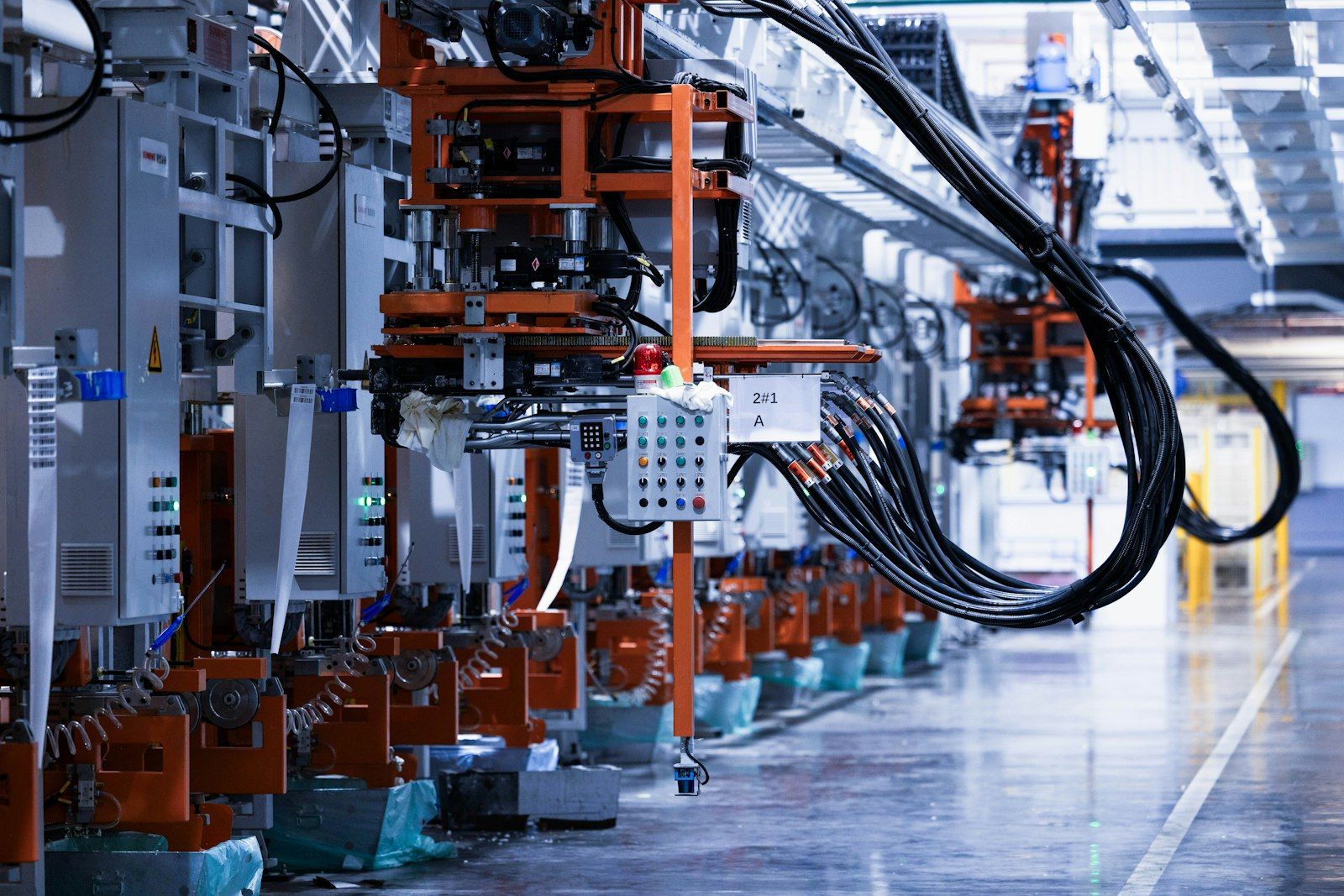

An AI-driven inspection station is one node in a larger automation stack. Real-world deployments connect the iPhone-as-sensor station to PLCs, MES, and IoT gateways, so detected quality issues trigger downstream actions in real time: a reject arm, a stop signal, a logged event. The end-to-end pipeline runs on commodity hardware and standard machine learning frameworks. That keeps it scalable as you add lines and maintainable as each model stays in service longer.
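The mapping from a result to a downstream action usually lives in the PLC or MES logic. Below is a hypothetical Python sketch of that mapping. It assumes a stop signal after five failed parts in a row and review logging for passes below 0.6 confidence. Both thresholds are invented for illustration.

```python
from __future__ import annotations

from enum import Enum


class Action(Enum):
    PASS = "pass"      # part continues down the line
    REJECT = "reject"  # trigger the reject arm
    STOP = "stop"      # send a stop signal to the PLC


def route(passed: bool, confidence: float, consecutive_fails: int,
          review_below: float = 0.6, stop_after: int = 5) -> tuple[Action, bool]:
    """Map one inspection result to (downstream action, log this event?).

    consecutive_fails counts failed parts in a row, including this one.
    """
    if not passed:
        # Assumption: a run of failures points to a process problem, so stop the line.
        action = Action.STOP if consecutive_fails >= stop_after else Action.REJECT
        return action, True  # every failure is logged
    # Log low-confidence passes so the shift lead can review them.
    return Action.PASS, confidence < review_below
```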

Two integration patterns dominate in 2026. Pattern one is sensor-only: the AI system sends a Pass/Fail verdict and a confidence score over MQTT or OPC UA, and your existing automation logic makes the decision. Pattern two is closed-loop: the system also provides a shift-lead dashboard where operators confirm or override predictions in real time, and those corrections feed the next training cycle. Closed-loop is the default for most production lines because models improve without a vendor visit. That is what turns a static inspection station into a self-improving one.
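Below is a minimal sketch of the data that flows in each pattern, assuming JSON over MQTT. The message fields and function names are hypothetical. The `training_queue` list stands in for a real label store.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class InspectionMessage:
    line_id: str
    part_id: str
    verdict: str        # "PASS" or "FAIL"
    confidence: float   # model confidence for the verdict, 0..1
    model_version: str


def to_payload(msg: InspectionMessage) -> bytes:
    """Pattern one (sensor-only): serialize the result as UTF-8 JSON for publishing."""
    return json.dumps(asdict(msg), sort_keys=True).encode("utf-8")


def record_override(msg: InspectionMessage, operator_verdict: str,
                    training_queue: list[dict]) -> None:
    """Pattern two (closed-loop): keep operator corrections as new training labels."""
    if operator_verdict != msg.verdict:
        training_queue.append({
            **asdict(msg),
            "label": operator_verdict,  # the shift lead's call replaces the model's
            "corrected_at": datetime.now(timezone.utc).isoformat(),
        })
```

With the `paho-mqtt` client, publishing would be `client.publish(topic, to_payload(msg))`. The topic layout depends on your broker setup.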

Both patterns reduce manual work. They remove the bottlenecks that made manual inspection time-consuming and inconsistent: re-checking the same part, re-training new operators, and chasing edge cases that only the senior shift lead can spot.

What three AI model types are in use today?

Three families of machine learning approaches show up in serious manufacturing AI applications today (we go deeper on the first two in our anomaly versus defect detection guide).

Defect detection learns from labeled bad parts. That fits closed defect sets, for example the six AWS D1.1 weld classes or the top five SMT defect types.

The anomaly approach learns the good state and flags anything off. That fits cosmetic surface checks and any case with open defect classes.

Hybrid approaches combine both: an anomaly model on Day 1 for broad coverage, then a labeled-defect model from Day 30 for the top classes. Serious vendors treat this as the state of the art in 2026. It is also how most AI applications in this space actually scale beyond the first line.
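Below is a Python sketch of one way a hybrid setup might combine the two models at decision time. Several details are assumptions for illustration: the score scales, the 0.5 and 0.8 thresholds, and the rule that a confident labeled-defect prediction takes precedence. Vendors combine the models in different ways.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class HybridVerdict:
    verdict: str  # "PASS" or "FAIL"
    reason: str   # which model triggered the decision


def hybrid_decision(anomaly_score: float,
                    defect_probs: Optional[dict[str, float]] = None,
                    anomaly_threshold: float = 0.5,
                    defect_threshold: float = 0.8) -> HybridVerdict:
    """Combine a labeled-defect model (once trained) with an anomaly model."""
    # From about Day 30: a confident known-class prediction names the defect.
    if defect_probs:
        cls, p = max(defect_probs.items(), key=lambda kv: kv[1])
        if p >= defect_threshold:
            return HybridVerdict("FAIL", f"defect:{cls}")
    # From Day 1: the anomaly model catches anything that departs from the good state.
    if anomaly_score >= anomaly_threshold:
        return HybridVerdict("FAIL", "anomaly")
    return HybridVerdict("PASS", "none")
```

Before Day 30, call it with `defect_probs=None` and it behaves as a pure anomaly detector. Once the labeled model exists, failures also carry a defect class for reporting.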

What datasets, frameworks, and algorithms power 2026 inspection?

The 2026 stack is narrower than the marketing suggests. Most production-grade systems in this category use a computer vision backbone (typically ResNet or EfficientNet) for defect detection, and PatchCore or PaDiM on the anomaly side. The frameworks behind them are PyTorch and, for edge inference, Apple Core ML. Training datasets are smaller than vendors used to claim: the realistic working range is 200 to 2,000 labeled images per defect class, not 100,000.
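Here is a heavily simplified sketch of PatchCore-style scoring in plain Python, to make the anomaly side concrete. It assumes a pretrained backbone (such as a ResNet) has already extracted patch features from good parts. It omits PatchCore's coreset subsampling and score reweighting. PaDiM works differently: it fits a Gaussian per patch position and scores with the Mahalanobis distance.

```python
from __future__ import annotations

import math


def nearest_distance(patch: list[float], memory_bank: list[list[float]]) -> float:
    """Euclidean distance from one patch feature to its closest 'good' feature."""
    return min(math.dist(patch, good) for good in memory_bank)


def anomaly_score(image_patches: list[list[float]],
                  memory_bank: list[list[float]]) -> float:
    """Image-level score: the largest patch-to-memory-bank distance."""
    return max(nearest_distance(p, memory_bank) for p in image_patches)
```

The memory bank holds features from good parts only, which is why this family needs no defect labels on Day 1. The Pass/Fail threshold on the score would then be calibrated on parts from the line.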

Two practical implications. One, you do not need a data lake to start; an iPhone capturing a few hundred parts per defect class is enough for a serious pilot. Two, when a vendor pitches generative AI or a custom large model for visual inspection, ask which open-source model it is built on. The honest answer is almost always one of the four named above. Anything else is a red flag.
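Below is a quick way to check whether a labeled image set is pilot-ready, using 200 images per class (the lower end of the working range) as the minimum. The class names in the test are hypothetical.

```python
from __future__ import annotations

from collections import Counter

MIN_IMAGES_PER_CLASS = 200  # lower end of the 200 to 2,000 working range


def missing_images(labels: list[str], expected_classes: list[str],
                   minimum: int = MIN_IMAGES_PER_CLASS) -> dict[str, int]:
    """Return each expected defect class that is short of `minimum`, with the shortfall."""
    counts = Counter(labels)  # Counter returns 0 for classes never labeled
    return {cls: minimum - counts[cls]
            for cls in expected_classes if counts[cls] < minimum}
```

An empty result means every class has at least the minimum. It says nothing about label quality, which still depends on the shift lead.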

How to spot a serious AI vendor

Training data: a serious vendor is transparent about how many images per defect class they need and who labels them. A vendor that quotes 100,000 images as the baseline is not using a modern approach.

Pilot format: a paid pilot in 30 to 60 days with explicit KPIs. Validation should happen on production parts, not demo samples. An unserious pilot runs four months without KPIs and requires three of the vendor's engineers to live on-site.

Pricing: OpEx with monthly cancelability, not six-figure CapEx. Anyone in 2026 still selling a five-figure camera cabinet plus a service contract is priced like the previous decade.

Outputs: a serious vendor shows you the dashboards and the raw confidence scores, not only a Pass/Fail summary. You should be able to trace any decision back to the image, the model version, and the feedback loops that produced it. That traceability is what makes the AI systems on your floor audit-ready under emerging quality engineering and quality management standards.
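Below is a minimal sketch of a per-prediction audit record. It assumes images are fingerprinted with SHA-256 and records are appended as JSON lines. The field names are illustrative, not a standard.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class PredictionRecord:
    image_sha256: str        # fingerprint of the exact image the model saw
    model_version: str
    verdict: str
    confidence: float        # raw score, not just Pass/Fail
    operator_override: Optional[str] = None  # set when the shift lead corrects it


def make_record(image_bytes: bytes, model_version: str,
                verdict: str, confidence: float) -> PredictionRecord:
    digest = hashlib.sha256(image_bytes).hexdigest()
    return PredictionRecord(digest, model_version, verdict, confidence)


def to_audit_line(rec: PredictionRecord) -> str:
    """One JSON line per prediction, suitable for an append-only audit log."""
    return json.dumps(asdict(rec), sort_keys=True)
```

A hash proves which image was scored even if the file is later moved, provided the archived image itself is kept.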

For the full buyer's view, see our AI inspection software guide and the vendor comparison. Both walk through end-to-end use cases by industry, with screenshots from real artificial intelligence deployments.

What are the four most common AI pilot mistakes?

First, too broad. Piloting three lines simultaneously on Day 1 produces three non-comparable results. Start with one line and one defect class. Use cases that are narrow on Day 1 win.

Second, cutting out the shift lead. A model is only as good as the labels the shift lead approves. Pilots that leave the shift lead out during the pilot face rejection afterward.

Third, no hard KPIs. Without clear numbers set on Day 1, a pilot ends up in limbo where no one can call Go or No-Go.

Fourth, hardware overkill. Buying 40,000 euro of cameras for a pilot locks in CapEx for a technology that will be cheaper and better in 12 months.

What is new in 2026 for AI-driven inspection?

Edge models: inference runs locally on device, no data leaves the line. That resolves latency and data sovereignty in one move.

iPhone as sensor: consumer-grade optics are enough for roughly 80 percent of inspection cases. An iPhone 15 Pro delivers 50-millisecond inference at 99 percent accuracy, at a fraction of the cost of an industrial camera.

Subscription licensing: pay per line per month, scale up or down, and avoid owning hardware that will be outdated by 2027. Costs are easy to track and optimize when every line is its own budget item. This is also where AI inspection finally meets the rest of factory automation on equal terms: a per-line fee, not a one-off CapEx that locks you out of the next automation upgrade.
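Here is a worked example of the per-line math, using two figures from this post. The first is Enao Vision's entry price of around 500 euro per line per month. The second is the 40,000 euro camera budget from the hardware-overkill mistake. This is a simplification: it ignores service contracts, installation, and other costs on both sides.

```python
from __future__ import annotations


def subscription_cost(active_lines_per_month: list[int],
                      fee_per_line_eur: float = 500.0) -> float:
    """Total fee when each month is billed only for the lines actually running."""
    return sum(active_lines_per_month) * fee_per_line_eur


def break_even_months(capex_eur: float, fee_per_line_eur: float = 500.0,
                      lines: int = 1) -> float:
    """Months of subscription that add up to the same spend as an up-front purchase."""
    return capex_eur / (fee_per_line_eur * lines)
```

`break_even_months(40_000)` returns 80 months for one line. That is far past the 12 months in which, per this post, the hardware would already be cheaper and better. A six-month schedule that scales from one line to three and back down, `[1, 1, 3, 3, 2, 0]`, costs 5,000 euro.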

Tighter integration with quality management: the same AI backbone that catches defects also feeds first-pass-yield reports, audit trails, and SPC charts. The gap between AI inspection and broader quality assurance tooling is closing fast. That is why a 2026 buying decision should be evaluated against a three-year quality engineering roadmap, not a single defect class. Quality assurance teams that already run automation on the line have the easiest path: same hardware, same automation logic, just smarter Pass/Fail.
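Below is a sketch of how inspection results might feed first-pass yield and an SPC chart. It assumes a fixed number of parts per subgroup (for example, per hour) and uses the standard p-chart with 3-sigma limits. The counts in the test are invented.

```python
from __future__ import annotations

import math


def first_pass_yield(passed_first_time: int, inspected: int) -> float:
    """Share of parts that passed inspection without rework or re-inspection."""
    return passed_first_time / inspected


def p_chart_limits(defectives: list[int], sample_size: int) -> tuple[float, float, float]:
    """(LCL, center line, UCL) for a p-chart with a constant subgroup size."""
    p_bar = sum(defectives) / (len(defectives) * sample_size)
    sigma = math.sqrt(p_bar * (1 - p_bar) / sample_size)
    # A fraction cannot go below zero, so the lower limit is clipped.
    return max(0.0, p_bar - 3 * sigma), p_bar, p_bar + 3 * sigma
```

If a subgroup's defective fraction lands above the upper limit, that signals a process shift worth investigating, separate from the individual Pass/Fail calls.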

At Enao Vision, the typical entry is an iPhone-based setup on an OpEx model starting around 500 euro per line per month, cancelable monthly, with zero CapEx. The first model is in production after a five-day onboarding. We share fine-tuning tips and high-quality recipes in our community Slack.

Bitkom's 34 percent adoption figure is a regional average. The top quartile of manufacturers already run two to five AI lines. If your site is still at zero, 2026 is the last year you can start without a competitive disadvantage.

Evaluating vendors or mid-pilot and want to sanity-check a recipe against other teams? Drop into our community.

Frequently asked questions about AI quality control in 2026

What is the minimum performance bar for AI-powered inspection in 2026?

Three thresholds: inference latency under 100 milliseconds per part, a false-negative rate under 0.5 percent on the agreed defect set, and pilot integration cost under 5,000 euro per line. Modern edge models on devices like the iPhone 15 Pro hit 50-80 ms latency out of the box, so any vendor quoting hundreds of milliseconds is running on outdated tooling. Anything above 5,000 euro for an initial pilot is priced like the previous decade. Treat these thresholds as the validation gate before you sign anything.

Defect detection or anomaly detection: which should I pick?

Pick by defect set. Defect detection learns from labeled bad parts and fits closed defect sets like the six AWS D1.1 weld classes or the top five SMT defect types. The anomaly approach learns the good state and flags anything off, which fits cosmetic surface checks where the defect set is open. The 2026 state of the art is hybrid: an anomaly model from Day 1 for broad coverage, then a labeled-defect model from Day 30 on the top classes. Hybrid is also the easiest to optimize as your label set grows.

How long should an AI quality control pilot run?

30 to 60 days, paid, with explicit KPIs and metrics agreed on Day 1. A pilot that runs four months without KPIs and requires three of the vendor's engineers on-site is not a 2026 pilot. Start with one line and one defect class. Decide Go or No-Go on numbers, not vibes. The shift lead must be in the loop the whole time, otherwise the model is rejected after the pilot regardless of accuracy.

Can an iPhone really replace industrial cameras for visual inspection?

For roughly 80 percent of cases, yes. An iPhone 15 Pro pairs a 48-megapixel sensor with on-device Core ML inference at 50 milliseconds and 99 percent accuracy on most cosmetic and assembly defects. Industrial cameras still win for hyperspectral, X-ray, sub-micron metrology, and very high-speed lines above 30 parts per second. For the rest, a refurbished iPhone plus a lamp and a mount keeps hardware under 1,000 euro per station.
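The 30-parts-per-second cutoff follows from a simple time budget. Below is a sketch that assumes one inference per part, run sequentially with no batching or pipelining. Image capture and PLC communication would eat into the same budget.

```python
def time_budget_ms(parts_per_second: float) -> float:
    """Milliseconds available per part at a given line speed."""
    return 1000.0 / parts_per_second


def keeps_up(inference_ms: float, parts_per_second: float) -> bool:
    """True if one inference per part fits inside the per-part time budget."""
    return inference_ms <= time_budget_ms(parts_per_second)
```

At 30 parts per second the budget is about 33 ms, below the 50 ms inference time quoted above. At 10 parts per second it is 100 ms, which leaves headroom.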

Key takeaways

- The 2026 bar for AI inspection: under 100 ms inference, under 0.5 percent false-negative rate, under 5,000 euro pilot integration cost per line.

- Three model types in production: defect detection (closed defect sets), anomaly detection (open defect sets), and hybrid (state of the art).

- Serious vendor signals: transparent training-data needs, 30-60 day paid pilot with explicit KPIs, OpEx licensing with monthly cancelability, every prediction traceable to image and model version.

- Four pilot mistakes to avoid: piloting too broad, cutting out the shift lead, no hard KPIs, and hardware overkill.

- What is new in 2026: on-device edge models, iPhone-grade sensors covering 80 percent of inspection cases, per-line subscription licensing replacing six-figure CapEx, and tighter integration into quality management workflows.

Get started

Want to see how Enao Vision works on your line? You can get started for free using an iPhone you already have, or join the community to compare notes with other quality and operations teams putting AI on the shopfloor.